Part 1: Business Acumen & Problem Scoping

Starting without a clear business objective

Diving into the data before understanding what the business actually wants to achieve.

Answering the wrong question

Solving the problem you think is interesting, rather than the one the stakeholder asked.

Ignoring the context of the data

Analyzing healthcare data the same way you analyze e-commerce data. Context is everything.

Not talking to domain experts

Trying to guess what a specific column means instead of asking the team who generates it.

Failing to define KPIs early

Analyzing success without agreeing on what "success" actually looks like.

Confusing correlation with causation

Assuming that because ice cream sales and shark attacks rise together, one causes the other.

Overpromising results

Telling stakeholders you can predict customer churn with 99% accuracy before seeing the data.

Ignoring the "So What?" factor

Presenting findings without explaining why the business should care.

Treating all metrics as equally important

Giving equal weight to vanity metrics and actionable metrics.

Failing to align with stakeholders

Building a report in a vacuum only to find out it doesn't fit the workflow.

Not understanding the revenue model

Analyzing metrics without understanding how the company actually makes money.

Working in a silo

Not sharing your approach with other analysts, leading to duplicated efforts.

Forgetting the target audience

Designing a highly technical dashboard for a CEO who just wants three key numbers.

Being a "ticket taker"

Just fulfilling data requests blindly instead of acting as a strategic thought partner.

Part 2: Data Collection & Cleaning

Trusting data blindly

Assuming data is clean just because it comes from a "pristine" data warehouse.

Not checking for missing values

Running aggregates without realizing a large portion of your data is NULL.

Mishandling nulls

Replacing NULL with 0 indiscriminately, which artificially tanks your averages.
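A minimal sketch of the difference, using hypothetical order values where `None` stands in for NULL. It first counts the missing values, then compares dropping them (which is what SQL `AVG()` does with NULLs) with blindly filling them with 0:

```python
from statistics import mean

# Hypothetical order values; None stands in for a missing (NULL) amount.
order_values = [120.0, None, 80.0, None, 100.0]

# Always check how much is missing before aggregating.
missing = sum(v is None for v in order_values)
print(f"{missing} of {len(order_values)} values are missing")

# Option A: ignore the missing values (SQL AVG() also skips NULLs).
observed = [v for v in order_values if v is not None]
mean_ignoring_nulls = mean(observed)

# Option B: replace NULL with 0, which treats "unknown" as "zero".
mean_with_zero_fill = mean(0.0 if v is None else v for v in order_values)

print(mean_ignoring_nulls)  # 100.0
print(mean_with_zero_fill)  # 60.0, dragged down by two fake zeros
```

Neither option is automatically right; the point is to choose deliberately based on what NULL means in that column.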

Ignoring outliers

Leaving massive anomalies in your dataset without investigating why they are there.

Deleting outliers blindly

Erasing anomalies just to make your chart prettier; sometimes true insight lies in the outlier.
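A middle ground between ignoring and deleting is to flag outliers and investigate them. The sketch below uses the common 1.5 × IQR convention (one rule of thumb among several) on hypothetical daily order counts; nothing is removed from the data:

```python
from statistics import quantiles

def flag_outliers(values, k=1.5):
    """Return (value, is_outlier) pairs using the k * IQR rule; nothing is deleted."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [(v, not (low <= v <= high)) for v in values]

# Hypothetical daily order counts; 400 is the anomaly worth investigating.
daily_orders = [52, 48, 50, 55, 47, 51, 49, 400]
flagged = flag_outliers(daily_orders)
outliers = [v for v, is_out in flagged if is_out]
print(outliers)  # [400]
```

The flag is a prompt to ask why (a bulk order? a bot? a logging bug?), not a verdict.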

Messing up time zones

Mixing UTC, EST, and PST in the same timestamp column.
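A minimal sketch of normalizing mixed timestamps to UTC before analysis. It assumes each row records its source zone, and it uses fixed offsets for brevity; real EST/PST data observes daylight saving time, so production code should use IANA zones (for example `zoneinfo.ZoneInfo("America/New_York")`):

```python
from datetime import datetime, timedelta, timezone

# Simplified fixed offsets (assumption): these ignore daylight saving time.
OFFSETS = {
    "UTC": timezone.utc,
    "EST": timezone(timedelta(hours=-5)),
    "PST": timezone(timedelta(hours=-8)),
}

def to_utc(naive_ts: str, zone: str) -> datetime:
    """Attach the source zone to a naive timestamp string and convert it to UTC."""
    local = datetime.fromisoformat(naive_ts).replace(tzinfo=OFFSETS[zone])
    return local.astimezone(timezone.utc)

# Hypothetical rows: the same moment recorded in three different zones.
rows = [
    ("2024-01-15 09:00:00", "EST"),
    ("2024-01-15 06:00:00", "PST"),
    ("2024-01-15 14:00:00", "UTC"),
]
normalized = [to_utc(ts, zone) for ts, zone in rows]
print({dt.isoformat() for dt in normalized})  # {'2024-01-15T14:00:00+00:00'}
```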

The Cartesian Explosion

Doing a SQL JOIN without checking for duplicate keys, multiplying your row count (and inflating every sum) because each match on a duplicated key creates an extra row.
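A small reproduction using Python's built-in `sqlite3` as a stand-in for your warehouse; the tables and values are hypothetical. One customer has two address rows, so three orders turn into five joined rows and revenue is overstated:

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.executescript("""
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
    CREATE TABLE addresses (customer_id INTEGER, city TEXT);
    INSERT INTO orders VALUES (1, 10, 50.0), (2, 10, 70.0), (3, 20, 30.0);
    -- customer 10 has two address rows, so the join key is NOT unique
    INSERT INTO addresses VALUES (10, 'Boston'), (10, 'Austin'), (20, 'Denver');
""")

# Check key uniqueness on the right-hand table before joining.
dupes = con.execute("""
    SELECT customer_id, COUNT(*) FROM addresses
    GROUP BY customer_id HAVING COUNT(*) > 1
""").fetchall()
print(dupes)  # [(10, 2)]

joined_rows, revenue = con.execute("""
    SELECT COUNT(*), SUM(o.amount)
    FROM orders o
    JOIN addresses a ON o.customer_id = a.customer_id
""").fetchone()
print(joined_rows, revenue)  # 5 270.0, although there are 3 orders worth 150.0
```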

Not standardizing text

Treating "NY", "New York", and "ny" as distinct entities.
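A minimal sketch of standardizing before grouping: trim, lowercase, collapse whitespace, then map known aliases to one canonical value. The alias table is hypothetical; in practice it should be agreed with whoever owns the data:

```python
# Hypothetical alias table mapping normalized spellings to one canonical code.
STATE_ALIASES = {"ny": "NY", "new york": "NY", "n.y.": "NY"}

def standardize_state(raw: str) -> str:
    """Normalize case and whitespace, then map known aliases to a canonical code."""
    key = " ".join(raw.strip().lower().split())  # trim, lowercase, collapse spaces
    return STATE_ALIASES.get(key, raw.strip().upper())  # fallback: uppercase as-is

values = ["NY", "New York", "ny", "  new   york "]
print({standardize_state(v) for v in values})  # {'NY'}
```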

Failing to document cleaning steps

Cleaning data manually without logging the steps, making results impossible to reproduce.

Using outdated data

Pulling a report based on a stale database snapshot.

Ignoring survivorship bias

Only analyzing active customers and ignoring the data of those who churned.

Not verifying data completeness

Analyzing a "full year" of data that unexpectedly ends in November.
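A quick completeness check is to list which periods have no rows at all before computing annual totals. A minimal sketch with a hypothetical extract that stops after November:

```python
from datetime import date

def missing_months(dates, year):
    """Return the calendar months of `year` that have no rows at all."""
    present = {d.month for d in dates if d.year == year}
    return [m for m in range(1, 13) if m not in present]

# Hypothetical extract: one row per month, January through November only.
order_dates = [date(2023, m, 1) for m in range(1, 12)]
print(missing_months(order_dates, 2023))  # [12]
```

Row counts per day or per month (not just presence) are a stricter version of the same check.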

Hardcoding values

Writing specific dates or IDs in scripts that will break in the next cycle.
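One way to avoid hardcoded dates is to derive the reporting window from the run date. A minimal sketch for a "previous calendar month" report; the function name is hypothetical, and in a real script you would pass `date.today()` and feed the result into a parameterized query:

```python
from datetime import date, timedelta

def previous_month_window(today):
    """Return (first_day, last_day) of the calendar month before `today`."""
    first_of_this_month = today.replace(day=1)
    last_of_prev = first_of_this_month - timedelta(days=1)
    return last_of_prev.replace(day=1), last_of_prev

# Replaces something like: WHERE order_date BETWEEN '2024-02-01' AND '2024-02-29'
start, end = previous_month_window(date(2024, 3, 15))
print(start, end)  # 2024-02-01 2024-02-29 (leap year handled automatically)
```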

Ignoring data lineage

Not knowing where your source data comes from or how it was transformed.

Assuming data is 1:1

Not checking for duplicates in keys that should be unique.

Part 3: Statistical & Analytical Errors

P-hacking

Torturing data by testing thousands of variables until you find a statistically significant result by pure chance.
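Why this happens, in numbers: with 20 independent tests at a 0.05 threshold and no real effects, the chance of at least one "significant" result is 1 − 0.95²⁰ ≈ 0.64. The sketch below applies a Bonferroni correction, one simple (and conservative) safeguard among several, to hypothetical p-values:

```python
def bonferroni_significant(p_values, alpha=0.05):
    """Flag which tests survive a Bonferroni correction (alpha / number of tests)."""
    threshold = alpha / len(p_values)
    return [p < threshold for p in p_values]

# Chance of at least one false positive across 20 independent null tests.
family_error = 1 - 0.95 ** 20
print(round(family_error, 2))  # 0.64

# Hypothetical p-values from testing 20 variables against churn.
p_values = [0.03] + [0.40] * 19
naive_hits = sum(p < 0.05 for p in p_values)
corrected_hits = sum(bonferroni_significant(p_values))
print(naive_hits, corrected_hits)  # 1 0: the lone "discovery" does not survive
```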

Ignoring statistical significance

Declaring a winner in a marketing campaign when the difference is within the margin of error.
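Before declaring a winner, it helps to run even a basic significance test. A minimal sketch of a two-sided, two-proportion z-test (pooled standard error) on hypothetical campaign numbers; for real decisions, a vetted statistics library is preferable:

```python
from math import erfc, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates (pooled standard error)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value

# Hypothetical campaign: variant B "wins" 5.375% vs 5.0% conversion.
z, p = two_proportion_z_test(200, 4000, 215, 4000)
print(round(z, 2), round(p, 2))  # p is far above 0.05: the gap looks like noise
```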

Not testing model assumptions

Applying linear regression to data that is clearly non-linear.

Overfitting the model

Building a complex model that perfectly predicts the past but fails miserably in the future.

Underfitting the model

Using a model too simple to capture the underlying patterns.

Confusing % with percentage points

Failing to distinguish between relative increases and absolute differences in rates.
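A worked example: a conversion rate moving from 2% to 3% is a 1 percentage point increase but a 50% relative increase. A small helper that reports both, so neither is quoted alone:

```python
def describe_change(old_rate, new_rate):
    """Return a rate change as (percentage points, relative percent)."""
    pp = (new_rate - old_rate) * 100
    relative = (new_rate - old_rate) / old_rate * 100
    return pp, relative

pp, rel = describe_change(0.02, 0.03)  # conversion rate moves from 2% to 3%
print(f"+{pp:.1f} percentage points, +{rel:.0f}% relative")
# +1.0 percentage points, +50% relative
```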

Calculating averages of averages

Averaging group-level averages gives a tiny group the same weight as a huge one, so the final number can be badly skewed. Divide the overall total by the overall count instead.
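A minimal sketch with two hypothetical stores of very different size, comparing the average of store averages with the true order-weighted average:

```python
# Hypothetical store-level results: (number of orders, total revenue).
stores = {"flagship": (1000, 50_000.0), "kiosk": (10, 2_000.0)}

# Average of averages: each store counts equally, regardless of size.
store_avgs = [rev / n for n, rev in stores.values()]          # 50.0 and 200.0
average_of_averages = sum(store_avgs) / len(store_avgs)        # 125.0

# Correct: total revenue divided by total orders.
total_orders = sum(n for n, _ in stores.values())
total_revenue = sum(rev for _, rev in stores.values())
true_average = total_revenue / total_orders                    # 52000 / 1010

print(average_of_averages, round(true_average, 2))  # 125.0 51.49
```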

Misinterpreting A/B test results

Reacting to daily fluctuations instead of waiting for stable results.

Stopping A/B tests too early

Ending a test the second it reaches significance, ignoring the required sample size.
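The required sample size can be estimated before launch and then honored. A minimal sketch using the standard normal-approximation formula for comparing two proportions; the baseline rate and minimum detectable lift below are hypothetical inputs:

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_group(p_baseline, min_detectable_lift, alpha=0.05, power=0.80):
    """Approximate users needed per variant for a two-sided two-proportion test."""
    p1 = p_baseline
    p2 = p_baseline + min_detectable_lift      # absolute lift, e.g. 0.01 = +1 pp
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical: 5% baseline conversion, want to detect a lift to 6%.
n = sample_size_per_group(0.05, 0.01)
print(n)  # roughly 8,000 users per variant; stop only after reaching it
```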

Not accounting for seasonality

Panicking about a drop in sales in January without comparing it to previous years.
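A minimal sketch contrasting a month-over-month comparison with a year-over-year one on hypothetical sales figures; the January dip that looks alarming against December turns out to be growth against last January:

```python
# Hypothetical monthly sales keyed by (year, month).
sales = {(2023, 1): 115_000, (2023, 12): 180_000, (2024, 1): 120_000}

mom = sales[(2024, 1)] / sales[(2023, 12)] - 1  # vs. holiday-heavy December
yoy = sales[(2024, 1)] / sales[(2023, 1)] - 1   # vs. the same month last year
print(f"MoM {mom:+.1%}, YoY {yoy:+.1%}")  # MoM -33.3%, YoY +4.3%
```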

Using mean when median is better

Reporting the mean for skewed datasets with massive outliers (like income), where the median better describes a typical value.
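A minimal sketch with hypothetical incomes, where a single very high earner pulls the mean far away from every other value while the median stays representative:

```python
from statistics import mean, median

# Hypothetical annual incomes; one executive skews the mean.
incomes = [42_000, 48_000, 51_000, 55_000, 60_000, 2_500_000]
print(mean(incomes))    # 459333.33...: describes nobody in the group
print(median(incomes))  # 53000.0
```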

Ignoring the distribution

Looking only at the average and failing to realize the data is bimodal.

Simpson’s Paradox ignorance

Failing to see that a trend appearing within groups can disappear, or even reverse, when the groups are combined.
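A minimal sketch using illustrative counts patterned on the classic kidney-stone treatment example: treatment A has the higher success rate in both subgroups, yet B looks better overall because the two treatments were given to very different mixes of cases:

```python
# Illustrative (successes, patients) per treatment and subgroup.
results = {
    "A": {"small": (81, 87), "large": (192, 263)},
    "B": {"small": (234, 270), "large": (55, 80)},
}

def rate(successes, total):
    return successes / total

# Within each subgroup, A has the higher success rate.
for group in ("small", "large"):
    a, b = rate(*results["A"][group]), rate(*results["B"][group])
    print(group, f"A={a:.0%} B={b:.0%}")

# Pooled across subgroups, the ranking flips.
totals = {t: tuple(map(sum, zip(*results[t].values()))) for t in results}
print({t: f"{rate(*totals[t]):.0%}" for t in totals})  # {'A': '78%', 'B': '83%'}
```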

Cherry-picking data

Only selecting specific timeframes that support your preconceived hypothesis.

Focusing only on the "happy path"

Analyzing successful user journeys while ignoring errors and drop-offs.

Part 4: SQL, Python & Coding Pitfalls

Using SELECT * in production

Wasting compute power and money by querying columns you don't need.

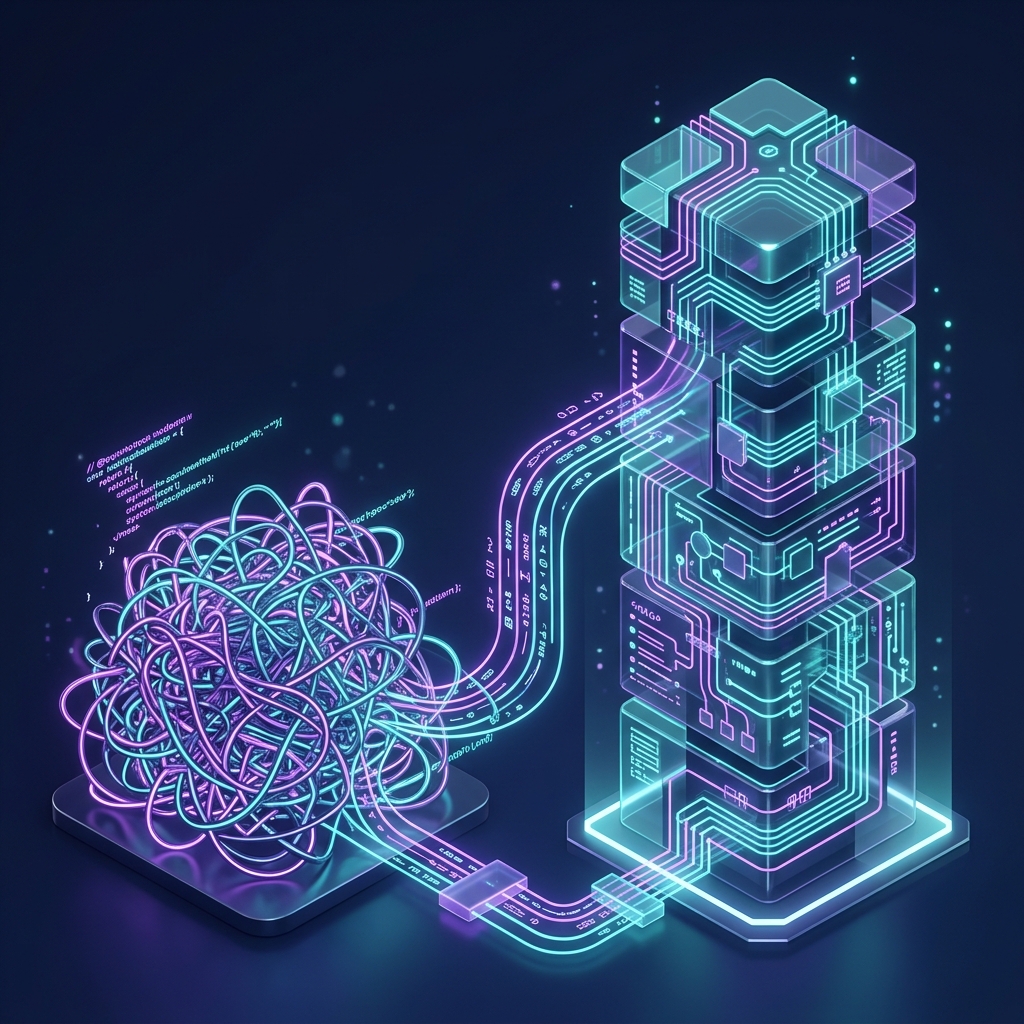

Writing monolithic SQL queries

Writing hundreds of lines without CTEs, making debugging impossible.
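A minimal sketch of the CTE style, run through Python's built-in `sqlite3` on hypothetical data. Each `WITH` step is named and can be queried on its own while debugging, and keywords are capitalized consistently:

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.executescript("""
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL);
    INSERT INTO orders VALUES (1, 10, 50.0), (2, 10, 70.0), (3, 20, 30.0);
""")

# Each CTE is one named, testable step instead of one nested monolith.
query = """
WITH customer_totals AS (
    SELECT customer_id, SUM(amount) AS total_spent
    FROM orders
    GROUP BY customer_id
),
big_spenders AS (
    SELECT customer_id, total_spent
    FROM customer_totals
    WHERE total_spent >= 100
)
SELECT customer_id, total_spent
FROM big_spenders
ORDER BY customer_id
"""
result = con.execute(query).fetchall()
print(result)  # [(10, 120.0)]
```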

Not commenting code

Returning to a "genius" script months later with no idea how it works.

Ignoring version control

Naming files "analysis_final_V2_REAL.sql" instead of using Git.

Not optimizing for performance

Using inefficient logic that causes queries to run for hours unnecessarily.

Inconsistent formatting

Mixing capitalized and lowercase SQL keywords, making code exhausting to read.

Relying on Excel for big data

Trying to open multi-million-row CSVs in Excel, which caps a worksheet at 1,048,576 rows, so the file gets truncated or your system crashes.

Not backing up scripts

Keeping all your code exclusively on your local desktop.

Meaningless variable names

Naming variables df1, temp, data_2, and x.

Violating DRY (Don't Repeat Yourself)

Copy-pasting the same block of code instead of writing a function.

Ignoring error handling

Writing scripts that break entirely when meeting a single unexpected value.
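A minimal sketch of defensive parsing: bad values are logged and set aside for review instead of crashing the whole run. The function name and sample values are hypothetical:

```python
import logging

logging.basicConfig(level=logging.WARNING)
log = logging.getLogger("cleaning")

def parse_amounts(raw_values):
    """Convert values to floats, logging and collecting bad rows instead of crashing."""
    parsed, rejected = [], []
    for i, raw in enumerate(raw_values):
        try:
            parsed.append(float(raw))
        except (TypeError, ValueError):  # None -> TypeError, "N/A" -> ValueError
            log.warning("row %d: could not parse %r", i, raw)
            rejected.append(raw)
    return parsed, rejected

good, bad = parse_amounts(["19.99", "5", "N/A", None, "12.50"])
print(good, bad)  # [19.99, 5.0, 12.5] ['N/A', None]
```

Keeping the rejected rows (rather than silently dropping them) also leaves a record of what the cleaning step did.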

Running heavy queries during peak hours

Locking up the production database during high-traffic business hours.

Misunderstanding JOINs

Using INNER JOIN when you needed a LEFT JOIN, dropping thousands of records.
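A minimal reproduction in Python's built-in `sqlite3` with hypothetical tables: a customer with no orders disappears from an INNER JOIN but is kept, with a count of 0, by a LEFT JOIN:

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.executescript("""
    CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER);
    INSERT INTO customers VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cai');
    INSERT INTO orders VALUES (100, 1), (101, 1), (102, 2);  -- Cai has no orders
""")

def orders_per_customer(join_type):
    return con.execute(f"""
        SELECT c.name, COUNT(o.order_id)
        FROM customers c
        {join_type} JOIN orders o ON o.customer_id = c.customer_id
        GROUP BY c.name
        ORDER BY c.name
    """).fetchall()

inner = orders_per_customer("INNER")
left = orders_per_customer("LEFT")
print(inner)  # [('Ana', 2), ('Ben', 1)]: Cai silently vanishes
print(left)   # [('Ana', 2), ('Ben', 1), ('Cai', 0)]
```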

Assuming indexing exists

Querying massive tables without filtering on indexed or partitioned columns, forcing the engine to scan every row.
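You can ask the engine how it will run a query instead of assuming. A minimal sketch with Python's built-in `sqlite3` (which has indexes but no partitions, so an index stands in here) and a hypothetical `orders` table; the exact plan wording varies by SQLite version:

```python
import sqlite3

con = sqlite3.connect(":memory:")
con.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, order_date TEXT, amount REAL)"
)

QUERY = "SELECT order_id, amount FROM orders WHERE order_date = '2024-01-15'"

def plan(sql):
    """Return SQLite's query plan; the last column of each row is a readable step."""
    return " | ".join(row[-1] for row in con.execute("EXPLAIN QUERY PLAN " + sql))

plan_before = plan(QUERY)  # typically a full "SCAN" of orders
con.execute("CREATE INDEX idx_orders_date ON orders (order_date)")
plan_after = plan(QUERY)   # typically "SEARCH ... USING INDEX idx_orders_date ..."
print(plan_before)
print(plan_after)
```

Warehouses expose the same idea through their own `EXPLAIN` output and partition-pruning statistics.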

Part 5: Data Visualization & Dashboards

Using pie charts for everything

Especially with more than 4 slices. Use a bar chart instead.

Using 3D charts

They distort data and make it harder to read correctly.

Dashboard clutter

Cramming 25 charts onto one page. Less is almost always more.

Bad color choices

Ignoring colorblind users or using rainbow palettes for sequential data.

Truncating the Y-axis

Starting a bar chart's axis at 50 instead of 0 to exaggerate a tiny difference.

Forgetting to label axes

Leaving the audience guessing what the numbers represent.

Missing legends

Using colors without explaining what they signify.

Relying on hover-overs for crucial data

Making stakeholders dig for the main point of the chart.

Aesthetics over readability

Creating "modern art" at the expense of clear data transmission.

No clear takeaway

Failing to use titles or annotations to tell the user what they are seeing.

Abusing dual axes

Implying a relationship between unrelated metrics on different scales.

Not designing for the medium

Building desktop-only dashboards for mobile-first executives.

Overusing gauges

Speedometers take up too much space for too little info. Use bullet charts.

Using static charts for deep-dives

Providing a PNG when the user needs to drill down into the data.

Part 6: Communication & Storytelling

The "Data Dump"

Sending a massive spreadsheet without any summary or context.

Using overly technical jargon

Discussing "heteroscedasticity" with the Head of Marketing.

No actionable recommendations

Pointing out a problem without offering a potential solution.

Hiding bad news

Sweeping negative results under the rug to please stakeholders.

Skipping the presentation practice

Stumbling through insights because you didn't rehearse the narrative flow.

Reading the slides

Reading numbers out loud instead of adding meaningful context.

Arguing over semantics

Getting defensive about definitions during a critical presentation.

Ignoring the "Next Steps"

Ending a presentation without a clear plan of action.

Death by PowerPoint

Creating a 50-slide deck when a 1-page summary would suffice.

Assuming background knowledge

Jumping into complex analysis without reminding the audience of the goal.

Getting defensive when challenged

Taking questions about data validity as personal attacks.

Part 7: Career & Mindset

Growth Mindset

Stopping the learning process

Thinking you "know it all" in an industry that evolves daily.

Imposter syndrome paralysis

Being too afraid of making a mistake to share your insights.

Chasing the newest tool

Spending weeks on hot frameworks instead of mastering SQL and logic.

Not building a portfolio

Relying solely on a resume rather than showcasing tangible projects.

Failing to network internally

Ignoring teams outside the data analyst bubble.

Refusing to ask for help

Spinning your wheels for days on a bug that a senior could solve in minutes.

Not tracking your ROI

Failing to document how your analyses saved or made money.

Hoarding knowledge

Refusing to document processes to feel "irreplaceable."

Looking down on data engineering

Thinking pipeline work is "beneath you."

Ignoring data privacy (GDPR/CCPA)

Casually emailing unencrypted PII to coworkers.
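When colleagues only need to count or join on people, not identify them, one option is to share a keyed hash instead of the raw identifier. A minimal sketch using Python's `hmac` and `hashlib`; the key and rows are hypothetical, and note that pseudonymized data generally still counts as personal data under GDPR, so this reduces exposure but does not replace your privacy team's rules:

```python
import hashlib
import hmac

# Hypothetical secret; keep it out of the shared file (e.g. in a secrets manager).
SECRET_KEY = b"replace-with-a-real-secret"

def pseudonymize(email: str) -> str:
    """Replace an email with a keyed hash so rows can still be joined but not read."""
    normalized = email.strip().lower().encode("utf-8")
    return hmac.new(SECRET_KEY, normalized, hashlib.sha256).hexdigest()[:16]

rows = [("ana@example.com", 120.0), ("ANA@example.com ", 80.0)]
shared = [(pseudonymize(email), amount) for email, amount in rows]
print(shared)  # both rows carry the same opaque token; no raw email is shared
```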

Working into burnout

Saying "yes" to every ad-hoc request until you're working 70-hour weeks.

Focusing on tools over logic

Believing Python is the answer when simple logic would suffice.

Skipping peer reviews

Pushing directly to production because "it's a simple change."

Taking data discrepancies personally

Feeling crushed when a dashboard number doesn't match a legacy system.

Poor expectation management

Promising a report in an hour when data cleaning will take a week.

Lacking empathy for the end-user

Getting frustrated when stakeholders struggle to use your dashboard.

Forgetting the human element

Treating data as strictly numbers, forgetting the people behind them.

Giving up when it’s messy

Data is messy. Embrace the chaos, clean it up, and find the story.